Run Qwen in LM Studio

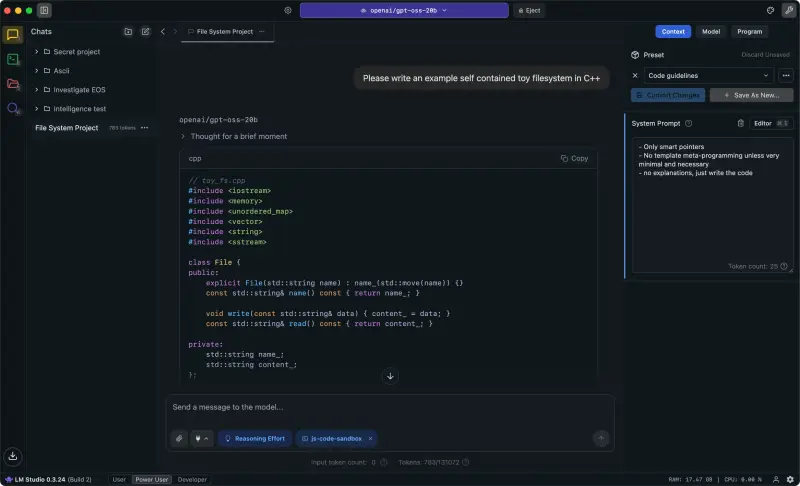

LM Studio is the fastest way to get Qwen running on your own machine without touching a terminal. Download a model, click Chat, start prompting. It handles quantization formats, memory management, and even serves an OpenAI-compatible API — all through a clean desktop interface that works on macOS, Windows, and Linux.

If you've tried other ways to run Qwen locally and bounced off the command line, this is your path. LM Studio wraps llama.cpp and MLX under a GUI that auto-detects your hardware, suggests models that fit your RAM, and lets you swap between backends with two clicks. On Apple Silicon Macs, it unlocks MLX — a backend that runs Qwen models 25-50% faster than standard GGUF with roughly half the memory footprint.

One caveat upfront: LM Studio's thinking mode toggle for Qwen 3.5 models has a known reliability issue (GitHub #1559). We cover the workarounds below — they're straightforward once you know about them, but you should know about them before you start.


Installing LM Studio

Three platforms, three install methods. Pick yours:

| Platform | How to Install | Notes |
|---|---|---|
| macOS | Download from lmstudio.ai or brew install --cask lm-studio | Apple Silicon only. Intel Macs aren't supported. |
| Windows | MSI installer from lmstudio.ai | Requires a GPU with Vulkan support for acceleration. |
| Linux | AppImage from lmstudio.ai | CUDA and Vulkan backends available. |

If you prefer the CLI, LM Studio also ships lms — a command-line tool that can pull models directly. After installing the desktop app, open a terminal and run:

```bash
lms get qwen/qwen3.5-9b
```

That downloads the model and makes it available in the GUI immediately. Useful if you already know which model you want and don't feel like browsing the catalog.

Finding and Downloading Qwen Models

Open LM Studio and hit Cmd+Shift+M (Mac) or Ctrl+Shift+M (Windows) to jump straight to the model catalog. Type "Qwen 3.5" in the search bar. You'll see dozens of results — different sizes, different quantization formats, from different uploaders.

Here's what you actually need to know about sizing. The table below shows each Qwen 3.5 variant with its download size and the RAM you'll need to run it:

| Model | Download Size | RAM Required | Best For |
|---|---|---|---|
| 0.8B | ~0.5 GB | 2 GB | Testing, embedded devices |
| 2B | ~1.5 GB | 4 GB | Light tasks, older laptops |
| 4B | ~2.5 GB | 6 GB | Everyday chat, summarization |
| 9B | ~5.5 GB | 7 GB | Sweet spot: strong reasoning, fits most machines |
| 27B | ~16 GB | 20 GB | Advanced reasoning, long context |
| 35B-A3B (MoE) | ~21 GB | 22 GB | Near-27B quality, faster inference |
| 122B-A10B (MoE) | ~65 GB | 80 GB | Maximum local quality, needs serious hardware |

Our pick for most users: the 9B. It fits in 7 GB of RAM, runs comfortably on any recent Mac or a Windows machine with 8+ GB VRAM, and its reasoning quality punches well above its weight class. If you have 24+ GB of unified memory on an Apple Silicon Mac, the 27B is worth the upgrade — noticeably smarter on complex prompts.
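
If you'd rather not eyeball the table, here's a rough sketch of the same lookup in Python. The RAM figures are copied straight from the sizing table above; the function name and the `headroom_gb` safety margin are made up for this example and are not LM Studio settings.

```python
# RAM requirements in GB, copied from the sizing table above.
QWEN35_RAM_GB = {
    "0.8B": 2,
    "2B": 4,
    "4B": 6,
    "9B": 7,
    "27B": 20,
    "35B-A3B": 22,
    "122B-A10B": 80,
}


def largest_model_that_fits(free_ram_gb, headroom_gb=1.0):
    """Return the variant with the highest RAM requirement that still fits.

    headroom_gb is a hypothetical safety margin left for the OS and other
    apps. Returns None when nothing fits.
    """
    budget = free_ram_gb - headroom_gb
    fitting = [(ram, name) for name, ram in QWEN35_RAM_GB.items() if ram <= budget]
    if not fitting:
        return None
    # Tuples compare by RAM first, so max() picks the most demanding fit.
    return max(fitting)[1]


print(largest_model_that_fits(8))
print(largest_model_that_fits(24))
```

Keep in mind this ranks purely by memory. On a 24 GB machine it suggests the 35B-A3B because it technically fits, while the 27B leaves more room for long contexts, so treat the result as an upper bound rather than a recommendation.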

Beyond Qwen 3.5, LM Studio also carries Qwen3, Qwen2.5-Coder, QwQ-32B, and Qwen3-VL (vision). Search for any of these by name. For the coding-specific models, see our Qwen Coder guide.

A tip on choosing uploads: filter results by "MLX" if you're on a Mac or "GGUF" for cross-platform compatibility. Look for quants from Unsloth or bartowski — these are community-trusted quantizers who consistently produce high-quality conversions. Not sure if your hardware can handle a specific size? Run it through our Can I Run Qwen? tool before downloading.

MLX vs GGUF on Mac: Why the Backend Matters

This is the single most important setting for Mac users, and most people miss it.

LM Studio supports two inference backends on Apple Silicon: GGUF (via llama.cpp, cross-platform) and MLX (Apple's own ML framework, Silicon-only). The performance gap between them isn't subtle — it's the difference between a model feeling sluggish and feeling responsive.

| Feature | GGUF (llama.cpp) | MLX |
|---|---|---|
| Token generation speed | Baseline | 25-50% faster (up to 2x for MoE models) |
| Memory usage | Higher | ~50% less |
| Prompt processing | Standard | 3-5x faster |
| Platform support | All (Mac, Windows, Linux) | Apple Silicon Macs only |
| Quantization options | More variety (Q2 through Q8, K-quants) | 4-bit, 6-bit, 8-bit, BF16 |

MLX was built around Apple's unified memory architecture: no translation layers, no compatibility shims. GGUF works everywhere, which is its strength, but llama.cpp is a cross-platform engine that reaches the Mac GPU through its Metal backend rather than a framework designed for Apple Silicon from the start, and per the figures above that generality costs you speed and memory.

Bottom line: if you're on an Apple Silicon Mac, always use MLX. The only reason to choose GGUF on a Mac is if you need a specific quantization level that MLX doesn't offer (rare) or you're testing cross-platform compatibility.

For MoE models like Qwen 3.5-35B-A3B, the gap is even wider. MLX can hit 2x the speed of GGUF on these architectures because it handles sparse activation patterns more efficiently. If you're running any MoE variant, MLX isn't just recommended — it's practically mandatory for a decent experience.

How to Switch Backends in LM Studio

Click the Chat icon in the sidebar, then select your loaded model at the top. In the model settings panel, look for Hardware Acceleration and switch it to MLX. LM Studio will reload the model with the new backend. You'll see the speed difference on your very first prompt.

If you don't see MLX as an option, make sure you've downloaded an MLX-format model — not a GGUF file. Go back to the catalog, filter by "MLX," and grab the right version. For a deeper look at running Qwen on Apple's ML framework outside of LM Studio, see our dedicated MLX guide.
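
If you want a number instead of a feeling, a rough way to compare backends is to time the same prompt against each one. The sketch below assumes the local API server covered later in this guide is running at localhost:1234 and that its responses carry a `usage.completion_tokens` field in the usual OpenAI format. The function names are hypothetical, and the timing is wall-clock, so it includes prompt processing as well as generation.

```python
import json
import time
import urllib.request


def tokens_per_second(completion_tokens, elapsed_seconds):
    """Average generation throughput for one request."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return completion_tokens / elapsed_seconds


def speedup_percent(baseline_tps, candidate_tps):
    """How much faster the candidate is than the baseline, in percent."""
    return 100.0 * (candidate_tps - baseline_tps) / baseline_tps


def measure(model, prompt, base_url="http://localhost:1234/v1"):
    """Send one chat request and return tokens per second.

    Assumes an OpenAI-compatible server at base_url (not called on import).
    """
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
    }).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    start = time.perf_counter()
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    elapsed = time.perf_counter() - start
    return tokens_per_second(payload["usage"]["completion_tokens"], elapsed)
```

Load the GGUF build, call `measure(...)` with the model name shown in LM Studio, switch to the MLX build, call it again with the same prompt, and feed both results to `speedup_percent`. A single run is noisy, so average a few before drawing conclusions.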

Thinking Mode: The Known Issue and How to Fix It

Qwen 3.5 models support a "thinking" mode where the model reasons step by step before answering — similar to chain-of-thought prompting, but built into the model itself. The problem: LM Studio's thinking mode toggle doesn't reliably control this. It's a known issue (GitHub #1559) and it affects all Qwen 3.5 models in LM Studio.

What happens in practice: you toggle thinking off in the UI, but the model still generates hundreds of invisible <think> tokens. Your response takes 3-5x longer than it should. The output looks normal, but the model burned through 800+ hidden tokens before giving you an answer. Wasteful on small models. Painful on large ones.

Three workarounds, pick whichever fits your workflow:

Option 1: CLI Override

If you use LM Studio's CLI tool, you can force thinking off at the command level:

```bash
lms chat --model qwen3.5-9b --config '{"enable_thinking": false}'
```

This bypasses the UI toggle entirely. Clean and reliable.

Option 2: API Parameter

When calling LM Studio's API (more on this below), pass the thinking flag in the request body:

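```python
from openai import OpenAI

# Same client setup as in the API server section of this guide
client = OpenAI(base_url="http://localhost:1234/v1", api_key="lm-studio")

response = client.chat.completions.create(
    model="qwen3.5-9b",
    messages=[{"role": "user", "content": "Summarize this document."}],
    # Sent as extra fields in the request body to switch thinking off
    extra_body={"chat_template_kwargs": {"enable_thinking": False}},
)
```

This works consistently. If you're routing requests through LM Studio's API server for coding agents or other tools, this is the method to use.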

Option 3: Template Override

In LM Studio's model settings, you can edit the prompt template directly. Add this line at the top of the system template:

```jinja
{% set enable_thinking = false %}
```

This permanently disables thinking for that model configuration. The most set-and-forget option, but you'll need to remove it when you actually want thinking enabled.

Worth noting: when thinking mode is working correctly, it genuinely improves output quality on math, logic, and complex reasoning tasks. The issue isn't with the feature itself — it's with LM Studio's UI toggle not reliably controlling it. Once LM Studio patches this (likely in a near-term update), the toggle should work as expected and these workarounds won't be necessary.
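
To check whether a workaround actually took effect, you can look for leftover reasoning in the raw output. The sketch below assumes the reasoning arrives inline as literal `<think>...</think>` tags in the response text. Depending on your LM Studio version and settings it may be stripped or returned in a separate field instead, so treat this as a starting point. `split_thinking` is a hypothetical helper, not part of any library.

```python
import re

# Non-greedy match so several separate <think> blocks are handled one by one.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_thinking(raw_text):
    """Return (reasoning, answer) extracted from raw model output."""
    reasoning = "\n".join(part.strip() for part in THINK_BLOCK.findall(raw_text))
    answer = THINK_BLOCK.sub("", raw_text).strip()
    return reasoning, answer


reasoning, answer = split_thinking("<think>2+2 is 4</think>\nThe answer is 4.")
print(repr(reasoning))
print(repr(answer))
```

If `reasoning` comes back non-empty after you've turned thinking off, the model is still spending tokens on it and the setting didn't stick.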

Sampling Parameters That Actually Matter

Qwen's team publishes recommended sampling settings for their models. Most people never change these and get decent results. But if you want optimal output, here's what to set in LM Studio's parameter panel:

| Use Case | Temperature | Top-P | Top-K | Presence Penalty |
|---|---|---|---|---|
| General chat (no thinking) | 0.7 | 0.8 | 20 | 1.5 |
| Reasoning (thinking mode) | 0.6 | 0.95 | 20 | 1.5 |
| Coding | 0.7 | 0.8 | 20 | 1.05 |

The critical rule: never use greedy decoding (temperature = 0) with Qwen models. Alibaba explicitly warns against this because it causes repetition loops and degraded output. Even for deterministic tasks, keep temperature at 0.6 or higher.

Notice the presence penalty of 1.5 for general use. That's unusually high compared to most models, but Qwen's architecture responds well to it — it reduces the circular, repetitive outputs that can plague local models on long conversations. For coding, it drops to 1.05 because code naturally repeats patterns (variable names, function signatures) and heavy penalties break syntax.

LM Studio includes one-click presets in its parameter panel. If the model you loaded came with a recommended configuration file, LM Studio will auto-apply those settings. Check the parameters tab after loading a Qwen model — if the values already match the table above, you're good to go.
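
If you call the model through the API rather than the GUI, the parameter panel doesn't help, so it can be handy to keep the table above as a small preset map. This is a sketch: the preset names and the `request_kwargs` helper are made up for this example. `temperature`, `top_p`, and `presence_penalty` are standard OpenAI chat parameters, while `top_k` is not, so it goes through `extra_body`. Whether your LM Studio version honors `top_k` there is an assumption worth verifying.

```python
# Values copied from the sampling table above.
SAMPLING_PRESETS = {
    "chat": {"temperature": 0.7, "top_p": 0.8, "top_k": 20, "presence_penalty": 1.5},
    "reasoning": {"temperature": 0.6, "top_p": 0.95, "top_k": 20, "presence_penalty": 1.5},
    "coding": {"temperature": 0.7, "top_p": 0.8, "top_k": 20, "presence_penalty": 1.05},
}


def request_kwargs(use_case):
    """Build keyword arguments for an OpenAI-style chat completion call."""
    preset = SAMPLING_PRESETS[use_case]
    return {
        "temperature": preset["temperature"],
        "top_p": preset["top_p"],
        "presence_penalty": preset["presence_penalty"],
        # top_k is not a named OpenAI SDK parameter, so pass it as an extra field.
        "extra_body": {"top_k": preset["top_k"]},
    }


print(request_kwargs("coding"))
```

With the OpenAI Python client you would then call `client.chat.completions.create(model=..., messages=..., **request_kwargs("coding"))`. If you also pass `chat_template_kwargs` to control thinking, merge it into the same `extra_body` dict instead of supplying `extra_body` twice.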

Using LM Studio as a Local API Server

This is where LM Studio goes from "nice chat app" to genuinely useful developer tool. With one toggle, it serves an OpenAI-compatible API at localhost:1234 — which means any tool, framework, or coding agent that supports the OpenAI API format works with your local Qwen model out of the box. No cloud costs. No rate limits. No data leaving your machine.

To enable it: click the Developer tab in LM Studio's sidebar, then toggle the server ON. That's it.

Here's a minimal Python example to test the connection:

```python
from openai import OpenAI

client = OpenAI(
    base_url="http://localhost:1234/v1",
    api_key="lm-studio",  # any string works
)

response = client.chat.completions.create(
    model="qwen3.5-9b",
    messages=[{"role": "user", "content": "Explain quantum entanglement simply."}],
)

print(response.choices[0].message.content)
```

The api_key field accepts any string — there's no authentication on a local server. The model name should match what you have loaded in LM Studio. If you're not sure of the exact name, hit the /v1/models endpoint to list available models.
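
Listing the models takes only the standard library. The sketch below assumes the server is running at localhost:1234 and that `/v1/models` returns the usual OpenAI list shape, a JSON object with a `data` array whose entries each have an `id`. Both function names are hypothetical.

```python
import json
import urllib.request


def extract_model_ids(payload):
    """Pull the model identifiers out of an OpenAI-style /models response."""
    return [entry["id"] for entry in payload.get("data", [])]


def list_model_ids(base_url="http://localhost:1234/v1"):
    """Fetch the model list from a running server (not called on import)."""
    with urllib.request.urlopen(f"{base_url}/models") as response:
        return extract_model_ids(json.load(response))


# Parsing demo with a hand-written payload in the assumed shape.
sample = {"object": "list", "data": [{"id": "qwen3.5-9b", "object": "model"}]}
print(extract_model_ids(sample))
```

Call `list_model_ids()` with the server switched on and copy whichever identifier it prints into the `model` field of your requests.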

Real-world use case: point Cline, Continue, or any AI coding assistant at localhost:1234 and you've got a free, private, unlimited coding copilot running Qwen Coder on your own GPU. No API keys to manage, no monthly bills, no code sent to external servers. For the setup details on coding-specific models, check our Qwen Coder page.

From the Community

Here's what the LM Studio team has shared about Qwen support:

Qwen3 is available on LM Studio in GGUF and MLX! Sizes: 0.6B, 1.7B, 4B, 8B, 14B, 30B MoE, 32B, and 235B MoE

— LM Studio (@lmstudio) April 28, 2025

The Qwen 3 launch brought full GGUF and MLX support across the entire model lineup. With Qwen 3.5, LM Studio extended that coverage to the new MoE variants — though the 35B-A3B initially required a beta runtime update before it would load correctly.

Qwen3-Coder with tool calling supported in LM Studio 0.3.20. 480B params, 35B active. ~250GB to run locally.

— LM Studio (@lmstudio) July 20, 2025

Tool calling support for Qwen3-Coder opened up local agentic workflows — 480B parameters with only 35B active per forward pass. You'll need around 250 GB to run it, so this one's reserved for Mac Studios with maxed-out unified memory or multi-GPU desktop setups.

Frequently Asked Questions

Should I use GGUF or MLX on my Mac?

MLX. Always. It's 25-50% faster for token generation, uses roughly half the memory, and processes prompts 3-5x faster. The only exception is if you need a quantization format that MLX doesn't support — but for Qwen models, MLX covers 4-bit through BF16, which handles every practical use case. See the full comparison above.

My model won't load — what's wrong?

Almost always a RAM issue. LM Studio auto-suggests models that fit your available memory, but if you manually downloaded a large model, it might exceed what your system can handle. Check the model size table above and compare against your free RAM (not total — free). On Macs, close memory-heavy apps first. On Windows, you need enough VRAM on your GPU. Use Can I Run Qwen? to check your specific hardware.

How do I disable thinking mode?

The UI toggle is unreliable for Qwen 3.5 models right now. Use one of the three workarounds in the thinking mode section: CLI config override, API parameter, or template edit. The CLI method (lms chat --config '{"enable_thinking": false}') is the quickest fix.

Can I use LM Studio as an API for coding agents?

Yes — and it's one of the best reasons to use LM Studio over simpler chat interfaces. Toggle the server on in the Developer tab, point your coding tool at http://localhost:1234/v1, and you've got a local, private, OpenAI-compatible API. Works with Cline, Continue, aider, and anything else that supports the OpenAI format. See the API server section for a working code example.

What's the best Qwen model to start with in LM Studio?

The Qwen 3.5 9B. It fits in 7 GB of RAM, runs fast on Apple Silicon and most discrete GPUs, and its reasoning quality is remarkably strong for a model this size. Start there. If it feels limiting after a week, step up to the 27B — but for most users, the 9B is more than enough. If you're specifically doing code work, grab Qwen2.5-Coder-7B instead.

How does LM Studio compare to Ollama?

LM Studio gives you a visual interface, model browsing, parameter adjustment, and an API server — all in one app. Ollama is CLI-first, lighter weight, and easier to script. If you want a GUI and prefer clicking over typing commands, LM Studio is the better fit. If you want a background service that just serves models via API, Ollama might be simpler. Both use llama.cpp under the hood for GGUF models, so raw performance on Windows and Linux is similar. On Mac, LM Studio's MLX support gives it a significant speed advantage.